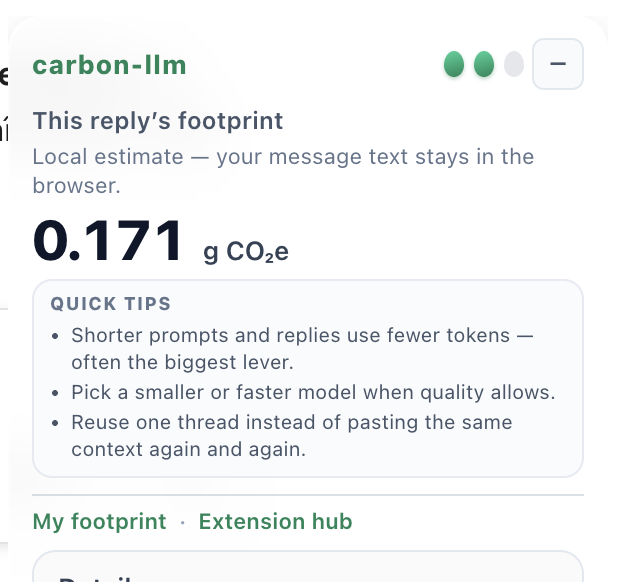

Chrome extension for web chat UIs

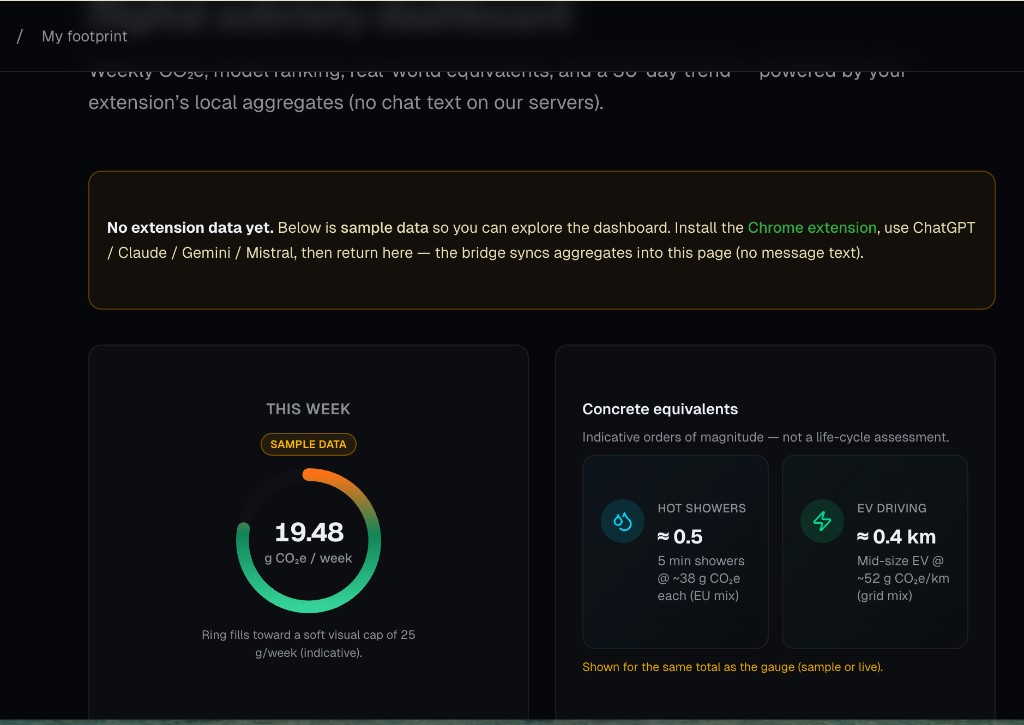

See the estimated CO₂ for each chat reply within minutes. The extension calls the same POST /api/v1/estimate endpoint as production and sends only the model name and token counts: prompts never leave your browser, and no account is needed. If you embed LLMs in products for your customers, sign up for a free account to get /track, API keys, and dashboards; that path is for integration, not for trying the estimate.
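The sketch below illustrates the "model + tokens only" property. It is a minimal sketch, not the extension's code: the `ChatReply` shape, the body field names (`model`, `input_tokens`, `output_tokens`) and `BASE_URL` are hypothetical placeholders, not the documented request schema or production host. The request body is built from an explicit allowlist, so prompt and reply text are never copied into it.

```typescript
// Hypothetical shape of a chat reply as the extension might see it in the page.
interface ChatReply {
  model: string;
  prompt: string;       // stays in the browser; not copied into the payload
  completion: string;   // stays in the browser; not copied into the payload
  inputTokens: number;
  outputTokens: number;
}

// Hypothetical request body; the real field names may differ.
interface EstimateBody {
  model: string;
  input_tokens: number;
  output_tokens: number;
}

interface EstimateRequest {
  url: string;
  init: { method: string; headers: Record<string, string>; body: string };
}

// Placeholder host (assumption); substitute your carbon-llm deployment.
const BASE_URL = "https://carbon-llm.example.com";

// Allowlist: only the model name and token counts are copied.
function toEstimateBody(reply: ChatReply): EstimateBody {
  return {
    model: reply.model,
    input_tokens: reply.inputTokens,
    output_tokens: reply.outputTokens,
  };
}

// Build (but do not send) the POST /api/v1/estimate request.
function buildEstimateRequest(reply: ChatReply): EstimateRequest {
  return {
    url: `${BASE_URL}/api/v1/estimate`,
    init: {
      method: "POST",
      headers: { "Content-Type": "application/json" },
      body: JSON.stringify(toEstimateBody(reply)),
    },
  };
}
```

Passing the result to `fetch(req.url, req.init)` would send it. Copying fields in by name, rather than deleting sensitive fields from the reply, means a field added to `ChatReply` later cannot leak into the payload by accident.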

Building from source (steps below) needs no account. Create a free account only when you ship /track or need API keys and the dashboard.

Install (from source)

A Chrome Web Store build is planned. Today you load the unpacked folder from the repository:

- Clone or download the carbon-llm repository.

- At the repo root, run `npm ci`, then `npm run build:extension`.

- Chrome → Extensions → Developer mode → Load unpacked → select `extensions/chrome-llm-carbon/` (the folder containing `manifest.json`).

Technical README: in your clone, see `extensions/chrome-llm-carbon/README.md`, which documents platform quirks and limits.

The extension intercepts provider responses to read token usage, then calls POST /api/v1/estimate on carbon-llm. It complements the REST API for teams that want to see estimates before wiring up server-side /track.
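As a hedged illustration of the "read token usage" step, the sketch below normalizes token counts once a response body has been captured. It assumes the body carries either an OpenAI Chat Completions style `usage` object (`prompt_tokens`, `completion_tokens`) or an Anthropic Messages style one (`input_tokens`, `output_tokens`). The internal endpoints behind web chat UIs may report usage differently or not at all, and `extractUsage` is a hypothetical name; per-platform handling is covered in the extension's README.

```typescript
// Token counts normalized across provider response shapes.
interface TokenUsage {
  inputTokens: number;
  outputTokens: number;
}

// Return a non-negative integer field, or undefined if missing or invalid.
function readCount(obj: Record<string, unknown>, key: string): number | undefined {
  const v = obj[key];
  return typeof v === "number" && Number.isInteger(v) && v >= 0 ? v : undefined;
}

// Assumption: usage arrives as OpenAI-style (prompt_tokens / completion_tokens)
// or Anthropic-style (input_tokens / output_tokens) fields under `usage`.
function extractUsage(responseJson: unknown): TokenUsage | null {
  if (typeof responseJson !== "object" || responseJson === null) return null;
  const usage = (responseJson as Record<string, unknown>)["usage"];
  if (typeof usage !== "object" || usage === null) return null;
  const u = usage as Record<string, unknown>;

  const input = readCount(u, "prompt_tokens") ?? readCount(u, "input_tokens");
  const output = readCount(u, "completion_tokens") ?? readCount(u, "output_tokens");

  // Both counts are required; otherwise report "no usage" instead of guessing.
  if (input === undefined || output === undefined) return null;
  return { inputTokens: input, outputTokens: output };
}
```

Returning `null` rather than filling in a default keeps a reply with incomplete usage data from producing a misleading estimate.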

My footprint, the view for extension users, is separate from the API dashboard used by integrations.

Methodology and confidence labels match PDF exports and the API.